Use cases for swath and time series aggregation

This page collects use cases for aggregating satellite swath and time series data. Our general approach is to use the Sequence data type to aggregate granules from swath and time series data sets (each with granules of its own kind; mixing the two would be possible in general, but is not the goal here). Data will be read from arrays and loaded into a Sequence object, where it will be filtered and concatenated with other Sequence objects. The result will be the aggregate. Of course, this will have to be optimized.

Sample data

- Level 3 data are easy to find. Some examples:

You can use Earthdata Search (https://search.earthdata.nasa.gov/search?m=0.0703125!0.140625!2!1!0!&ff=Subsetting+Services) to find Level 3 data. Click on the icon next to any dataset and then click on the "API Endpoints" tab. That will give you the OPeNDAP endpoint.

- For Level 2 data, here are a couple of examples:

- GLAS: The folders are all named GLA<N>* or GLAH<N>*; those with N >= 10 are L2 products (GLA10, GLA11, GLAH10, etc.). See http://nsidc.org/opendap/GLAS/contents.html

- MODAPS (MODIS): The FTP server is here: ftp://nrt1.modaps.eosdis.nasa.gov/allData/1/. Log in with your URS credentials (create an account here: https://urs.earthdata.nasa.gov). Folders ending in _L2 contain Level 2 data.

My notes:

- Level 3 data will be DAP2 Grids or DAP4 Coverages and look like they can easily be aggregated using NcML. We might think about a function that could aggregate them, but it's not in scope for this task.

- The GLAS data are stored in one-dimensional arrays. These are time series data (HDF5_GLOBAL.featureType: timeSeries). The GLAH files are HDF5 files. The one I looked at has 1 Hz, 40 Hz and 0.25 Hz (4 s) data. For each of the sample rates, there are d_lat, d_lon and UTCTime arrays along with a large number of dependent variables in arrays. There are also some browse images. For some of the time series data there are two dimensions, where the second dimension provides cloud layer information (that is, values were gathered for cloud top and bottom for each of 10 layers).

- Suppose we want to aggregate a number of granules of these data. We can build a table of lat, lon, time[, cloud layer] and zero or more dependent variables for each granule, then concatenate the tables and filter them. Optimizations include filtering before concatenating and, going further, reading only the data that would pass the filter in the first place.

- By including a granule name, and using nested Sequences, we can include useful metadata and make it easier to transform the resulting Sequence back into an array. The nested Sequence could be flattened for a return (as DAP binary or CSV).

- The MODIS data are typical MODIS L2 products with a number of dependent variables in 2D arrays and two more 2D arrays, one each for lat and lon.

- We could read these data into a table with lat, lon and zero or more dependent values, then concatenate and filter. Optimizations are to read just the data needed and/or to filter before concatenation. We could add granule and array index information to simplify the transformation back from the Sequence to an array.
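The table-building idea in these notes can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the granules are tiny made-up 2D lists rather than real MODIS or GLAS arrays, the function names (`granule_rows`, `in_box`, `aggregate`) are hypothetical, and the filter is a simple lat/lon box with no dateline handling. It shows the "filter before concatenating" optimization and the extra granule and array index columns.

```python
from itertools import chain


def granule_rows(name, lat, lon, values, keep):
    """Yield (granule, i, j, lat, lon, value) rows for one granule.

    lat, lon and values are equally shaped 2D lists. keep is a predicate
    applied per cell, so rejected cells never enter the table.
    """
    for i, (lat_row, lon_row, val_row) in enumerate(zip(lat, lon, values)):
        for j, (la, lo, v) in enumerate(zip(lat_row, lon_row, val_row)):
            if keep(la, lo):
                yield (name, i, j, la, lo, v)


def in_box(south, north, west, east):
    """Build a lat/lon bounding-box predicate (no dateline handling)."""
    return lambda la, lo: south <= la <= north and west <= lo <= east


def aggregate(granules, keep):
    """Concatenate the already-filtered rows of every granule into one table."""
    return list(chain.from_iterable(
        granule_rows(name, lat, lon, values, keep)
        for name, lat, lon, values in granules))


# Two made-up granules, each a 2x2 swath: (name, lat, lon, values).
g1 = ("g1", [[10.0, 10.0], [11.0, 11.0]], [[20.0, 21.0], [20.0, 21.0]], [[1, 2], [3, 4]])
g2 = ("g2", [[12.0, 12.0], [13.0, 13.0]], [[20.0, 21.0], [20.0, 21.0]], [[5, 6], [7, 8]])

table = aggregate([g1, g2], in_box(11.0, 12.0, 20.0, 20.5))
for row in table:
    print(row)
```

Because each row carries the granule name and the (i, j) indices, a client could in principle scatter the values back into per-granule arrays.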

I'm going to close this spike. The larger task in this sprint for this aggregation topic is to design the function; I'm going to write up some use cases and ask Patrick if they describe his needs.

Here are some URLs I used to get data:

- L2:

- L3:

Use cases

- Satellite Swath Data Aggregation returned using CSV format

- Satellite Swath Data Aggregation returned using netCDF files

- Satellite Time Series Aggregation: This use case differs only slightly from the Satellite Swath 1 use case, and I think it's pretty clear how to extrapolate the 'return as netCDF' version without writing it.

Design

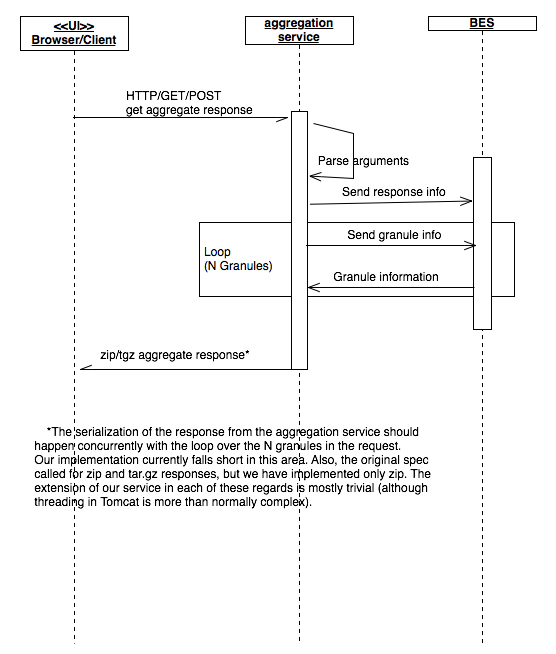

Note that this text is somewhat old and talks only about the CSV response. However, the same sequence diagram applies to the netCDF3/4 and 'file' responses.

There are two parts to both the CSV- and tar-ball-response solutions. As it happens, the two primary use cases (get swath data as CSV and get swath data in netCDF files) will likely be implemented using some different code in both the OLFS and BES. However, the narrative for both use cases' designs is roughly the same. A new web service endpoint will be made to process the requests. The requests will be made using HTTP POST, where the list of granules will be enumerated in the request body and query parameters will supply the remaining information. Once the OLFS has parsed the information, it will use the BES to access the data and build the response, with two variations.
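To make the request shape concrete, here is a minimal Python sketch of a client building such a POST. Everything specific in it is an assumption made for illustration: the endpoint URL, the query parameter names (`operation`, `var`, `bbox`, `time`), and the one-granule-per-line body format are hypothetical and not part of any agreed interface. The request is only constructed, never sent.

```python
from urllib.parse import urlencode, urlsplit, parse_qs
from urllib.request import Request


def build_aggregation_request(endpoint, granules, variables, bbox, time_range, fmt):
    """Build (but do not send) a POST: granules in the body, the rest in the query."""
    query = urlencode({
        "operation": fmt,                       # hypothetical parameter names
        "var": ",".join(variables),
        "bbox": ",".join(str(x) for x in bbox),
        "time": "/".join(time_range),
    })
    body = "\n".join(granules).encode("utf-8")  # one granule path per line (assumed)
    return Request(f"{endpoint}?{query}", data=body, method="POST",
                   headers={"Content-Type": "text/plain; charset=utf-8"})


granules = ["/data/swath/granule_001.hdf", "/data/swath/granule_002.hdf"]
req = build_aggregation_request(
    "http://localhost:8080/opendap/aggregation",  # hypothetical endpoint
    granules,
    variables=["lat", "lon", "sst"],
    bbox=(-10, 10, 30, 50),
    time_range=("2015-01-01", "2015-01-02"),
    fmt="csv",
)

print(req.get_method(), req.full_url)
print(parse_qs(urlsplit(req.full_url).query))
print(req.data.decode("utf-8"))
```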

For the CSV response, the BES will actually access each granule and combine the data from them into a single response. In this case the OLFS will call the BES once, passing in each granule using a BES request with multiple containers, one per granule, and requesting the response as CSV or ASCII. In addition, the OLFS will need to pass in the array variables as parameters of a server function that can form them into a table, along with a constraint expression that will select the requested space-time values.

For the netCDF response, the OLFS will iterate over the N granules, making N discrete requests for data from the named arrays within a space-time ROI. For each request, the OLFS will specify the return format as 'netCDF'. It will then collect the resulting netCDF response documents and bundle them using tar/gz or zip and return the result to the client.

Alternate version for the netCDF return implementation: We may be able to use the BES stored result feature to eliminate the multiple trips to the BES. More investigation is needed.

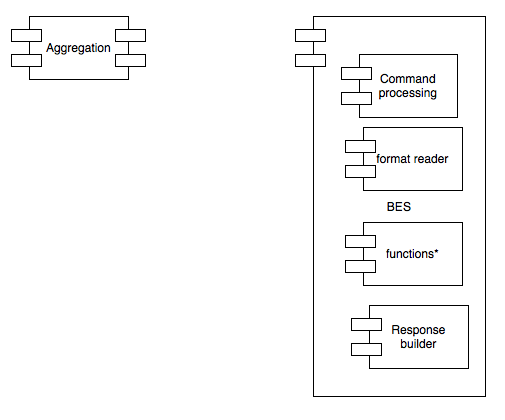

BES support for the service

Here is a Sequence diagram for the BES code used to build the responses:

In the figure, the BES iterates over each (of N) container and calls a function that takes M arrays and forms them into a single Sequence (DAP2 or DAP4; both are implemented now). One important feature that is not shown in the diagram is that all of the data from the arrays are read into the sequence object, to be filtered later. It's possible that a later optimization might drop that, and some code used to build the netCDF response might help with that - see below. The initial version of the function will read all of the data from the arrays passed to it, assuming some later step will be used to filter them.

Once the data values are all read, each of the sequences is wrapped in a Structure (this is how the BES represents containers). The AggregationServer will then take all of the containers, get their sequences, and merge them into a single sequence.

The single sequence will be the sole top-level variable in the response DDS/DMR. The ResponseBuilder object will serialize it, routing the data through a CSV/ASCII transmitter.
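The merge-then-serialize step can be sketched as follows. This is a conceptual Python model, not libdap or BES code: a container is represented as a plain `(name, columns, rows)` tuple standing in for a Structure wrapping a Sequence, and `merge_containers` and `to_csv` are hypothetical names. It assumes every container exposes the same columns, which seems implied by the design but is not stated outright.

```python
import csv
import io


def merge_containers(containers):
    """Concatenate the rows of every container into a single sequence.

    containers is a list of (container_name, columns, rows) tuples.
    All containers must expose the same columns in the same order.
    """
    if not containers:
        return [], []
    columns = containers[0][1]
    merged = []
    for name, cols, rows in containers:
        if cols != columns:
            raise ValueError(f"container {name!r} has columns {cols}, expected {columns}")
        merged.extend(rows)
    return columns, merged


def to_csv(columns, rows):
    """Serialize the merged sequence as CSV text with a header row."""
    out = io.StringIO()
    writer = csv.writer(out, lineterminator="\n")
    writer.writerow(columns)
    writer.writerows(rows)
    return out.getvalue()


containers = [
    ("c0", ["lat", "lon", "t"], [(1.0, 2.0, 10)]),
    ("c1", ["lat", "lon", "t"], [(3.0, 4.0, 20), (5.0, 6.0, 30)]),
]
columns, rows = merge_containers(containers)
csv_text = to_csv(columns, rows)
print(csv_text)
```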

Issues: DAP2

- Sequence::serialize will need to be specialized

- hard

- This is required to handle the case where other code loads all of the data values. The current version assumes that each row is read one at a time and the selection criteria are applied then; rows that don't match the selection criteria are never part of the Sequence object. The function, however, assumes that the sequence will filter out the unwanted values after they have been loaded into memory. This could be quite complex.

- The AggregationServer must be written

- easy

- Take the DDS with N Structures, extract the Sequences and concatenate them.

- I'm not sure where in the DAP2 the CE Selection will be performed

- spike

- This could be important
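The difference behind the first issue above (Sequence::serialize) can be shown with a small conceptual model. This is Python pseudocode for the two behaviours, not the libdap API; `serialize_streaming` and `serialize_preloaded` are hypothetical names, and rows are plain tuples.

```python
def serialize_streaming(read_row, selects, emit):
    """Current model: read one row at a time; rejected rows are never stored."""
    sent = 0
    while (row := read_row()) is not None:
        if selects(row):
            emit(row)
            sent += 1
    return sent


def serialize_preloaded(rows, selects, emit):
    """Specialized model: all rows are already in memory; filter while sending."""
    sent = 0
    for row in rows:
        if selects(row):
            emit(row)
            sent += 1
    return sent


data = [(1, 5.0), (2, 15.0), (3, 25.0)]
wanted = lambda row: row[1] > 10.0  # stands in for the CE selection

source = iter(data)
streamed = []
serialize_streaming(lambda: next(source, None), wanted, streamed.append)

preloaded = []
serialize_preloaded(data, wanted, preloaded.append)

print(streamed, preloaded)
```

Both paths send the same rows; what changes is where the values live when the selection is evaluated, which is why the existing serialize logic cannot be reused unchanged.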

Issues: DAP4

- D4Sequence::serialize must be specialized

- easy to moderate

- Along the lines of the DAP2 case, the only real difference being that the DAP4 sequence code is much simpler.

- CE Filters have yet to be implemented in DAP4

- moderate

- Must implement grammar, evaluation.

- I'm not sure where in the code the filter operation will be performed

- spike

- However, in DAP4, a server function is defined as building a new DMR to which the CE is then applied. Not sure how containers will affect this.

- The AggregationServer must be written

- easy

- ...and likely the same code as for DAP2

- Containers are not yet supported by the DMR class

- moderate

- Could copy the DDS implementation...

BES support for the netCDF tar-ball response

We will need to write a server function that will take the Arrays and determine how to subset them to eliminate data not in the user's ROI, unless this is not required (check on that). Beyond this, however, no other code is needed in the BES for this response. The iteration over the granules will be handled by the OLFS.
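One way such a subsetting function might work is to find the smallest index rectangle that covers every cell inside the ROI and then apply that rectangle to each dependent array. The Python sketch below illustrates this under simplifying assumptions: arrays are small 2D lists, the ROI is a lat/lon box with no dateline handling, and `roi_index_bounds` and `subset` are hypothetical names rather than existing BES functions.

```python
def roi_index_bounds(lat, lon, south, north, west, east):
    """Return (row_min, row_max, col_min, col_max), or None if no cell is inside."""
    rows, cols = [], []
    for i, (lat_row, lon_row) in enumerate(zip(lat, lon)):
        for j, (la, lo) in enumerate(zip(lat_row, lon_row)):
            if south <= la <= north and west <= lo <= east:
                rows.append(i)
                cols.append(j)
    if not rows:
        return None
    return min(rows), max(rows), min(cols), max(cols)


def subset(array, bounds):
    """Slice a 2D list to the inclusive index rectangle given by bounds."""
    r0, r1, c0, c1 = bounds
    return [row[c0:c1 + 1] for row in array[r0:r1 + 1]]


lat = [[0.0, 0.0, 0.0], [1.0, 1.0, 1.0], [2.0, 2.0, 2.0]]
lon = [[10.0, 11.0, 12.0], [10.0, 11.0, 12.0], [10.0, 11.0, 12.0]]
values = [[1, 2, 3], [4, 5, 6], [7, 8, 9]]

bounds = roi_index_bounds(lat, lon, south=1.0, north=2.0, west=11.0, east=12.0)
print(bounds, subset(values, bounds))
```

For a real swath the lat/lon grid is curved, so the index rectangle will usually include some cells outside the ROI; those would still need to be filtered or masked by a later step.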

OLFS support for the new web services

The OLFS will need to parse the inbound HTTP POST request, with the CSV and netCDF responses presenting distinct cases.

CSV case

For this case, the OLFS will build a single BES request where the various Query String parameters are moved into obvious places in the BES setContainer, define and get elements in the request command. Two differences between this command and a 'typical' DAP command are that each of the granules listed in the body of the HTTP Request Document will be a container in this command, and the command will specify an AggregationServer instance that will perform the aggregation operation. However, aside from the different HTTP request type (nb: we do support POST for DAP2 requests, albeit unofficially) and the different command, the basic back and forth of the OLFS and BES is not significantly different from a normal DAP service.
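A rough sketch of what such a multi-container command could look like, built with Python's standard XML library. The setContainer, define and get element names come from the description above; everything else is an assumption for illustration, including the namespace URI, the attribute names, the `aggregate` element and its `handler` value, and the `tabulate` function in the constraint. The real OLFS is not written in Python; this only shows the shape of the document.

```python
import xml.etree.ElementTree as ET

BES_NS = "http://xml.opendap.org/ns/bes/1.0#"  # assumed namespace URI


def q(tag):
    """Qualify a tag name with the (assumed) BES namespace."""
    return f"{{{BES_NS}}}{tag}"


def build_bes_request(granules, constraint, return_as="csv"):
    """Build one request with a container per granule and a single get."""
    ET.register_namespace("bes", BES_NS)
    request = ET.Element(q("request"), {"reqID": "example"})
    for n, path in enumerate(granules):
        container = ET.SubElement(request, q("setContainer"),
                                  {"name": f"c{n}", "space": "catalog"})
        container.text = path
    define = ET.SubElement(request, q("define"), {"name": "d"})
    for n in range(len(granules)):
        ref = ET.SubElement(define, q("container"), {"name": f"c{n}"})
        ET.SubElement(ref, q("constraint")).text = constraint
    # Hypothetical way of naming the AggregationServer instance.
    ET.SubElement(define, q("aggregate"), {"handler": "sequence.aggregation"})
    ET.SubElement(request, q("get"),
                  {"type": "dods", "definition": "d", "returnAs": return_as})
    return request


request = build_bes_request(
    ["/data/swath/granule_001.hdf", "/data/swath/granule_002.hdf"],
    "tabulate(lat,lon,sst)&lat>10",  # hypothetical function and selection
)
xml_text = ET.tostring(request, encoding="unicode")
print(xml_text)
```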

netCDF case

NB: We may use the BES stored result feature to build this, and that would change the following.

For this request, the OLFS will receive a request that is structurally similar to the CSV web service HTTP Request Document: a POST with the granules listed in the request document body and various other parameters in the Query String. However, instead of building a single BES command, the OLFS will build and issue N commands, one for each granule. Each of these commands will request that the data be subset, maybe including a lat/lon/time constraint implemented as a server function, and returned as (returnAs) a netCDF file. As the responses come back, it will save off the resulting netCDF files so that they can be bundled up into a tar/gz or zip file and sent back to the user.
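The collect-and-bundle loop might look roughly like the following Python sketch. It is not OLFS code: `fetch` is a hypothetical callable standing in for "issue one BES command and return the netCDF bytes", and the member naming scheme is an assumption. The archive is built in memory to keep the example self-contained.

```python
import io
import tarfile


def bundle_netcdf(granules, fetch):
    """Issue one fetch per granule and pack the results into a tar.gz archive.

    fetch(granule) must return the netCDF response as bytes.
    Returns the bytes of the finished archive.
    """
    buf = io.BytesIO()
    with tarfile.open(fileobj=buf, mode="w:gz") as tar:
        for n, granule in enumerate(granules):
            payload = fetch(granule)
            # Prefix with a counter so members stay unique and ordered.
            name = f"{n:04d}_{granule.rsplit('/', 1)[-1]}.nc"
            info = tarfile.TarInfo(name=name)
            info.size = len(payload)
            tar.addfile(info, io.BytesIO(payload))
    return buf.getvalue()


# Stand-in fetch: a real one would call the BES and return netCDF bytes.
archive = bundle_netcdf(["/data/a.hdf", "/data/b.hdf"], lambda g: g.encode("utf-8"))

with tarfile.open(fileobj=io.BytesIO(archive), mode="r:gz") as tar:
    member_names = tar.getnames()
    first_payload = tar.extractfile(member_names[0]).read()

print(member_names)
```

A production version would probably stream to disk or directly to the client instead of holding the whole archive in memory.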